8 hours of instruction

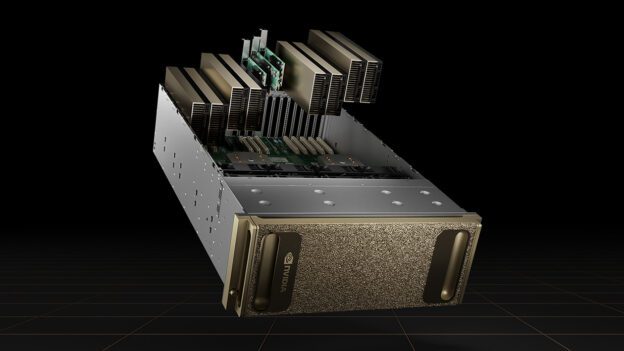

Learn the tools and techniques needed to write CUDA C++ applications that can scale efficiently to clusters of NVIDIA GPUs.

OBJECTIVES

- Learn several methods for writing multi-GPU CUDA C++ applications

- Use a variety of multi-GPU communication patterns and understand their tradeoffs

- Write portable, scalable CUDA code with the single-program multiple-data (SPMD) paradigm using CUDA-aware MPI and NVSHMEM

- Improve multi-GPU SPMD code with NVSHMEM’s symmetric memory model and its ability to perform GPU-initiated data transfers

- Get practice with common multi-GPU coding paradigms like domain decomposition and halo exchanges

- Explore scaling considerations for a variety of GPU-cluster configurations

PREREQUISITES

None

SYLLABUS & TOPICS COVERED

- Introduction

  - Meet the instructor

  - Create an account

- Multi-GPU Programming Paradigms

  - Use CUDA to program multiple GPUs

  - Learn how to enable and use direct peer-to-peer memory communication

  - Write an SPMD version with CUDA-aware MPI

- Introduction to NVSHMEM

  - Use NVSHMEM to write SPMD code for multiple GPUs

  - Use symmetric memory to let every GPU access data on other GPUs

  - Make GPU-initiated memory transfers

- Halo Exchanges with NVSHMEM

  - Write an NVSHMEM implementation of a Jacobi solver for the Laplace equation

  - Refactor a single-GPU 1D wave equation solver with NVSHMEM

- Complete the assessment and earn a certificate

SOFTWARE REQUIREMENTS

Each participant will be provided with dedicated access to a fully configured, GPU-accelerated workstation in the cloud.
